“In my presentation for MTLS, I will share some of my ongoing artistic research on biological and computational systems. A lot of my recent work looks at or looks for ghosts in intelligent machines. On the one hand, the work is about getting in touch with the non-human condition of the computers that work as our interlocutors and infrastructure, shaping our reality. On the other hand, it is about the computers getting in touch with the more-than-human world around them. Following alternative cybernetics, I believe that the world is not a closed jar but an open ecosystem of intelligence, always changing. I believe that our brain is not the limit of consciousness. And I believe that understanding oneself as interconnected with the wider environment, organic and synthetic alike, marks a profound shift in subjectivity: one beyond anthropocentrism and individualism.”

I would like to start with a thought from philosopher Yuk Hui in his essay “The Mystical” in The Brooklyn Rail some two years ago. Hui compares the ability of AI and data science “to show us facts that are hidden, facts that escape the limits of our human senses” to the ways in which the microscope and the telescope once opened up new worlds in front of us. He writes: “what remains to be asked, is apart from mere facts, what kind of truth will become available to us?”

In this talk, I’ll share with you some glimpses of my artistic exploration into biological and computational systems, focusing on the topic of the workshop: techno-living systems, or collaboration and interplay between AI and non-human entities. As you’ll see, a lot of my work simultaneously zooms out to space and into the gut, looking for connections between these two realms. Much of it uses some kinds of scientific imaging and sonification methods from microscopes to telescopes to artificial neural networks—always to science-fictional ends. I know some of you very well and so a lot of this will be repetition. However, I thought it would be useful to quickly go through even some of the older works leading up to where I’m currently at with my thinking and working around the topics at hand today and tomorrow.

I’ll start from Gut-Machine Poetry, which is actually a commission by Attilia, together with Kiasma in Helsinki, from 2017.

Ever since the Renaissance, the most complex machines that we’ve developed have been used as the analogy of the mind. But the human mind is intuitive and too complex an organism to formalize. For example, the head brain works together with the gut brain. Computers are, by design, deterministic: they follow set procedures. Curious about embodied cognition beyond the human species, I introduced entropic processes into computing in the Gut-Machine Poetry project via inserting fermenting foodstuff into the guts of a computer—basically putting together a homebrew computer that was operated by a symbiotic colony of bacteria and yeast in a kombucha tea ferment and producing a new kind of language.

What you just saw was a video excerpt of a digital microbial poetry culture: a “wetware random number generator” based on microscopic footage of a SCOBY connected to a series of letters. The stochastic movement of yeast eating sugar affects the jumbling of letters on the screen. The letters are based on a text about code laws and the gut-brain axis—my interpretation of an ancient Sumerian incantation dealing with universal language.

The word-jumbling algorithm, connected to the kombucha feed and crafted by Vincent de Belleval, takes after Jumbo, a program that cognitive scientist and AI researcher Douglas Hofstadter developed to solve anagrams based on the actions inside a biological cell. In his experiment, letters are combined and broken apart by different types of “enzymes” that, as he describes, “jiggle around, glomming on to structures where they find them, kicking reactions into gear.”
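As a rough illustration of the idea behind the “wetware random number generator” described above (not the code used in the piece), here is a minimal Python sketch. It assumes the microscope footage arrives as grayscale frames flattened into lists of brightness values; the frame data and the function names `motion_seed` and `jumble` are hypothetical and chosen only for this example.

```python
import hashlib
import random


def motion_seed(prev_frame, next_frame):
    """Condense the pixel-wise change between two grayscale frames into an integer seed."""
    # Each difference stays in the range 0-255, so it fits into a bytes object.
    diffs = bytes(abs(a - b) for a, b in zip(prev_frame, next_frame))
    return int.from_bytes(hashlib.sha256(diffs).digest(), "big")


def jumble(text, seed, swaps=3):
    """Swap a few character positions, chosen by a generator seeded from the motion."""
    if len(text) < 2:
        return text
    rng = random.Random(seed)
    letters = list(text)
    for _ in range(swaps):
        i = rng.randrange(len(letters))
        j = rng.randrange(len(letters))
        letters[i], letters[j] = letters[j], letters[i]
    return "".join(letters)


# Two hypothetical 4x4 frames (16 brightness values each), standing in for the SCOBY footage.
frame_a = [10, 12, 200, 198, 11, 13, 201, 197, 9, 14, 199, 196, 10, 12, 200, 198]
frame_b = [10, 40, 180, 198, 11, 60, 170, 197, 9, 14, 199, 196, 30, 12, 200, 150]

line = "gut machine poetry"
print(jumble(line, motion_seed(frame_a, frame_b)))
```

The sketch only shuffles characters; the “enzyme”-style combining and breaking apart of letters in Hofstadter’s Jumbo is not modeled here.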

In addition to my obsession with kombucha, I love nattō: a sticky Japanese food made from fermented soybeans. Its main ingredient Bacillus subtilis is an extremophilic bacterium that has also been used as a survival indicator in spaceflight experimentation. Tolerating physically and geochemically extreme conditions, its spores could have been blown to Earth from another planet by cosmic radiation pressure. Maybe life itself came here in this spore-bearing form. In my video Holobiont, I present the nattō bacteria as possible distributors of life between the stars, proposing that, perhaps, it’s already all connected. Inspired by the theory of panspermia (literally “seeds everywhere”), the video suggests that when we coordinate our outer and inner quests, we will find that the so-called alien is in us, this time in the form of some space bacteria we ingested a long time ago. Oh, and the term “holobiont,” after Lynn Margulis, stands for an entity made of many species, all inseparably linked in their ecology and evolution. A Mars rover plays one of the leading roles in the video, and there’s a moment when we see the Bacillus subtilis nattō bacteria from its perspective, through a machine eye. In fact, this is the only way in which we, as humans, can ever see bacteria: via the complex tools of observation and representation that we’ve devised. From this perspective, as my friend Beny Wagner once put it, microbes can be considered completely technological.

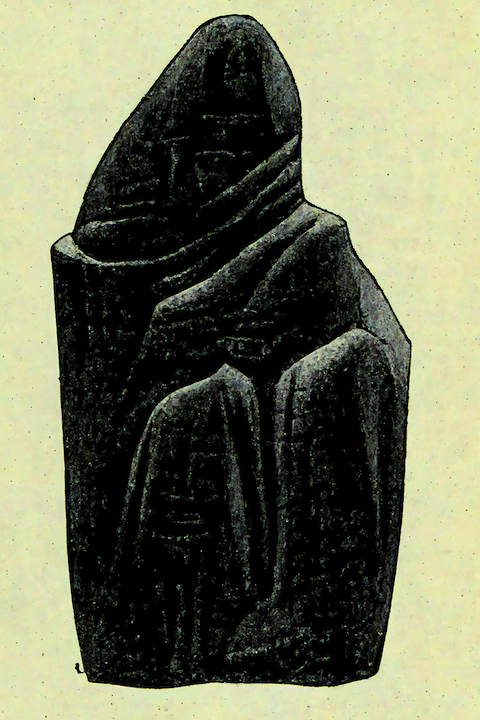

My work with the extremophilic Bacillus subtilis continued in 2018 in a project titled nimiia cétiï. In it, I placed the space and gut bacteria under a microscope and, together with an intelligent machine and a few ingenious humans (kudos to Memo Akten and Damien Henry!), we devised a written and a spoken language based on the bacteria’s movements, as well as early ideas of a Martian tongue, in an attempt to give it a voice. The machine, here, was portrayed not only as a spirit medium but also as an alien of our creation.

There’s an interesting link between communication with extraterrestrial intelligence and communication with intelligent machines. We might’ve built the machines ourselves, and they work as our interlocutors and infrastructure, but now the challenge is to understand their non-human condition. For example, the so-called black box problem in machine learning means that it’s sometimes hard to explain how an AI has come to its conclusions.

The nimiia cétiï project has its roots in the séances of the Swiss French medium Hélène Smith, who in the late nineteenth century claimed she could communicate with Martians. Her Martian language is actually considered one of the first documented forms of glossolalia (speaking in tongues), or vocalizing speech-like syllables that lack any readily comprehensible meaning. In Smith’s time, astronomers equipped with early low-resolution telescopes believed that they had discovered canals on the red planet, thereby bringing to public attention the idea that Mars might be habitable. By the early twentieth century, improved astronomical observations revealed that the “canals” had been nothing more than an optical illusion. Contemporary high-resolution mapping of the surface of Mars shows no such features. Another kind of technologically mediated truth has become available to us.

My video shows a computer watching footage of the Bacillus subtilis bacteria under a microscope and generating a script (or calligraphy) based on an analysis of what it sees. Imagine a pen suspended from a long piece of string, resting on paper that’s slowly sliding sideways. Raw force from the movements of the bacteria knocks the pen around, leaving marks on the paper. Audio interacts with the bacterial movements. What you hear is the computer reorganizing or mimicking the early Martian language.
A network trained on my voice looks at each frame of the video and produces a short block of sound that it thinks matches that frame, or the configuration of bacteria in it. Another layer of sound, “the vocals,” presents a more typical approach where the network simply generates more of what it has heard before.

Beyond the still rather anthropomorphic approach of writing (or calligraphy) in the video, there’s also a brain-like illustration: a map of what’s happening under the microscope from the computer’s perspective. This image will be published in the HOLO Annual, edited by Nora, very soon. It’s based on a t-distributed stochastic neighbor embedding (t-SNE) algorithm. You could think of it like a séance with an AI. The AI records video of a group of Bacillus subtilis bacteria moving around on the surface of a Petri dish. Having looked at the bacteria for more than half an hour, the AI produces an image describing all the bacterial movements it saw. One pixel in the image corresponds to one frame in the video. The pixels are organized according to some mysterious logic, largely inexplicable to a human. If you watch the full nimiia cétiï video, the path that the camera follows on the Martian terrain is actually based on this “nattō brain.” Here, the high-dimensional data from the bacterial movements is placed in a three-dimensional space…

Which brings me to yet another project with AI and sculptural objects that I wanted to present here. I Magma was commissioned by Moderna Museet and Serpentine Galleries in 2019. One of the starting points of the project is the lava lamp as the original psychedelic technology, designed for “those about to trip,” as Timothy Leary put it. Another one is the deep dreaming artificial neural networks that present a more contemporary image of access to “the thing in itself.”

The work consists of two parts.
A physical installation of lava heads with blobs of liquid and color in motion provides a seed for the machine learning-based generation of images and text in a mobile app. The handblown glass lava heads (two of them pictured here) are also my neuroplastic portraits. The installation, with cameras next to the heads, draws from experiments by the engineers at Silicon Graphics in the ’90s, who suggested that lava lamps were a useful tool for generating randomness. Even today, a wall of lava lamps called The Wall of Entropy helps encrypt online data at a web performance company in San Francisco. Instead of randomness, however, my work looks for patterns, signs, or meaning in the lava movements.

The I Magma app is inspired by the origins of binary code in the I-Ching (Book of Changes). With input not only from the lava heads but also from mobile phones worldwide, it performs divinations based on the digital blobs that form. The divinations read like trip reports of sorts. In fact, Erowid’s Shulgin Archives, along with the Internet Sacred Text Archive, are what the AI-powered system has learned from. The app, specifically the machine learning in it, was a collaboration with Memo Akten, again, and Allison Parrish. So, the machine has learned a bunch of different shapes from an online doodling data set and makes some rather mystical connections between what it sees in the blobs and the folklore or psychedelia that it knows from the archives. One of my favourite readings from the app must be: “No central creatures are fixed. | I is a derivative.” Or, I also like this one: “It returned to the egg. | The circle is the whole.” Trippy things like this…

One more thing before I try to sum it up and hand over to the next speakers: the topic of sonifying microscopic, even nanoscale phenomena is very interesting to me, as a way of observing life by listening instead of only looking.
In addition to the science-fictional approach applied in nimiia cétiï, I’ve been engaging in some scientific discourse and experiments in the area. For example, as part of my residency at MIT during the past couple of years, I collaborated with materials scientist Markus J. Buehler to explore molecular vibrations through sound. Based on his “nanomechanical analysis of the vibrational signatures of materials,” as he describes it, we sonified different neurotransmitters, such as the love and bonding hormone oxytocin, which you’ll hear in the next clip. Then we imprinted the neurotransmitters on the surface of water with an acoustic transducer, while using that water for the meditative practice of uncontrollable, Waldorf-style wet-on-wet painting. The additional patterns towards the end of the video are generated by a neural network that has learned from the molecular ripples and is now seeing them everywhere. (This project was inspired by the experimentalist Masaru Emoto’s ideas about water as a “blueprint of our reality” and his work on how different emotional energies and vibrations can change water’s physical structure, but I won’t get into that in more detail now.)

Anyway, there are different interesting sonification approaches around, such as the one by physicist Jim Gimzewski, who’s known for his work on sonocytology at UCLA and particularly for the “screaming yeast” that anthropologist Sophia Roosth has written so well about. Gimzewski is basically using the tip of a microscope like a needle on a record and the record, so far, has consisted of yeast cells. This is one of Gimzewski’s scanning tunneling microscopes.

Wrapping up, a lot of my work looks at or looks for the ghosts in the intelligent machines of our creation that are increasingly shaping our reality. On the one hand, the work is about getting in touch with the non-human condition of the computers around us.
And, on the other hand, it’s about the computers getting in touch with the more-than-human world around them. Another focus is the invisible organic life forms that govern our lives. You know, when zooming in, there are, for example, all those extreme-loving bacteria that can be seen or heard swarming in our microbiomes and that play a role in the course of our health and well-being as well as our thoughts and emotions, essentially making us who we are, or speaking through us. I guess my project is really about interspecies symbiosis, organic and synthetic alike. And then there’s also the psychedelic project of focusing on things-in-themselves, be it bacteria or intelligent machines, beyond their use value. Which is something you can do as an artist infiltrating the science or technology lab.

JENNA SUTELA works with words, sounds, and other living media, such as Bacillus subtilis nattō bacteria and the “many-headed” slime mold Physarum polycephalum. Her audiovisual pieces, sculptures, and performances seek to identify and react to precarious social and material moments, often in relation to technology. Sutela’s work has been presented at museums and art contexts internationally, including Guggenheim Bilbao, Moderna Museet, Serpentine Galleries, and, most recently, Shanghai Biennale and Liverpool Biennial. She was a Visiting Artist at The MIT Center for Art, Science & Technology (CAST) in 2019–21.